“There’s no other film that I’ve worked on or probably will ever work [on] quite like Avatar,” says production sound mixer Tony Johnson. “It’s unique and in many respects I wouldn’t liken it to regular filmmaking. The technology, language, and processes Jim [James Cameron] uses are specific to the Avatar world.”

Johnson, a New Zealand native whose credits include Peter Jackson’s The Hobbit trilogy, has been immersed in Pandora since production on the original film began in 2007. Avatar was released two years later, shattering box office records and earning nine Academy Award nominations, including one for achievement in sound mixing. In 2017 the much-anticipated sequel Avatar: The Way of Water (2022) went into production, with the added complexity of simultaneously shooting the third film, Avatar: Fire and Ash (2025).

“There was a core group of people, myself included, that were from the original film and went right through. And it’s intense because when you’re making it, it’s highly technical, as Jim is very specific and detailed about the workflow,” notes Johnson. “There are lots of job titles that would never be on another film and then there are other hurdles. Like you don’t get to read the full script, only the live-action. So in some ways you don’t know exactly what you’re doing but you have to adapt. I had some unique and exhilarating experiences, things I would never normally do on another movie, that I’ll never forget.”

Part of the tech hurdle was that Cameron shot the motion capture performances on dedicated stages at Manhattan Beach Studios, where actors wore capture suits covered with markers. Their body movements were recorded by an array of cameras, while head-mounted cameras recorded detailed facial performances. A virtual camera system let the filmmakers move through the digital world in real time as the actors’ performances were translated into Na’vi characters within the CG environment. Overseeing the mo-cap production sound recordings on The Way of Water and Fire and Ash were mixer Julian Howarth and his team.

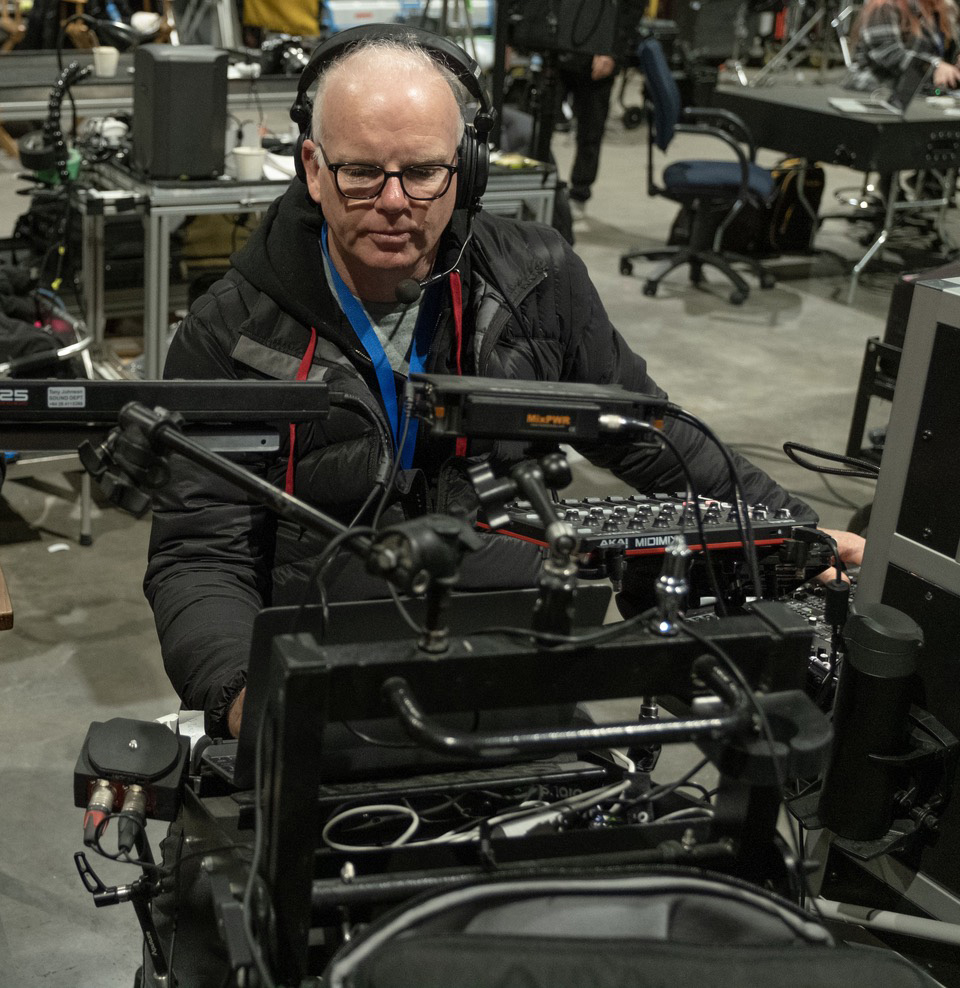

When production moved to live-action, sets were constructed at Stone Street Studios in Wellington, New Zealand (and at other nearby locations), with Johnson overseeing the recordings. While his audio cart setup evolved over time, on Fire and Ash it included a Deva 24 recorder and a Sonosax ST10 mixer, along with a Zaxcom RX-12 wireless receiver, ZMT4 transmitters, and TRX743 plug-on transmitters. Schoeps CMC641 and CMIT 5U mics, along with DPA 4017 shotguns, were paired with DPA and Countryman lavs. For backup and breakaway recordings, Johnson had a Zaxcom Nomad with Aria-8 and Aria-4 control surfaces, which was upgraded to a Nova 2 during production. Pro Tools and Ableton Live were used for sound effects playback, and Phonak Roger for in-ear monitoring.

Below, Johnson discusses how he approached recording dialogue, why oxygen masks played a key role, that massive Fire and Ash battle sequence, and how Zaxcom tech helped make it all possible.

Photo: 20th Century Studios

Recording Na’vi Dialogue

Standing 8-10 feet (2.7-3 meters) tall, the Na’vi tower over humans. To achieve accurate eyelines, Cameron developed technology that enhanced realism, leaving production sound to faithfully capture dialogue and performance.

When we filmed live-action scenes between humans and Na’vi on Avatar (2009), we used the tennis-ball-on-a-pole method for the eyelines, but it never worked for Jim. For the sequels, they had this ingenious invention that was kind of like a cable cam setup. They would put posts on each corner of the set and run cables across. But instead of a camera, we mounted a tablet, a small omnidirectional speaker, and a wireless receiver to represent the Na’vi character.

In Fire and Ash, there are scenes where Quaritch [Stephen Lang] is walking around a control room talking to General Ardmore [Edie Falco] or Selfridge [Giovanni Ribisi]. Post would edit the clip of Quaritch from the performance capture and it would then be transmitted to the tablet, allowing the human actors to interact with the Na’vi character at the correct height. The cable cam was programmed to move the same way as the performance capture. Jim wanted the dialogue to come from the same place as the character on the tablet, so we played it out of the speaker instead of using earwigs. The sound was routed through my mixer and transmitted to the receiver and speaker so Quaritch’s [or other Na’vi characters’] dialogue could be heard on set. At the time, I thought it was a bit crazy and was concerned about the noise it would make, but it was really mind-blowing and surprisingly quiet. When you see those scenes, they’re really well done.

The challenge was that the edited clips included breaths and effort as well as dialogue. You don’t want those because they can pollute the live-action dialogue. So I routed the Na’vi playback track pre-fader to one side of my headphones so I could hear those things coming up and dip them out. It was quite a unique experience because I was constantly listening to something that was full level while trying to monitor the human dialogue. A good thing about Jim is he rehearses, so we had plenty of opportunities to get it right. During rehearsals, I would solo the boom and the lavs to see how it was sounding, what the background was doing, the acoustics, everything. We were able to get issues sorted out before shooting.

Masking a Spider

When the humans evacuate at the end of Avatar (2009), Spider (Jack Champion) gets left behind and is raised by the Sully family. Pandora’s atmosphere isn’t safe for humans to breathe, so he (like the other humans) wears a mask that supplies oxygen. Johnson’s approach to miking the mask evolved all the way through Fire and Ash.

When I started, I was using the Zaxcom ZMT3, which is great, with the Countryman B6 because at the time the DPA 6061 wasn’t available and the DPA 6060 was too high level. I needed a lower-sensitivity microphone. Then on the sequels, we transitioned to a mix of B6s and DPA 6061s with the ZMT3 before going all Zaxcom ZMT4s and 6061s.

With Spider, he wore a wig with dreadlocks so the costume and hair departments made space for the ZMT3 (and later the ZMT4) hidden in the back of the wig. The lav was then threaded through a dreadlock on the side of his head which served us really well for the entire run.

When the humans wore oxygen masks, there was a prop oxygen pack on their waists with a tube connecting it to the mask. We would thread the lav through the tube and bring it through the rubber shroud of the mask. The prop oxygen pack was hollowed out just enough to place the ZMT4 inside. The ZMT4s gave us a lot more battery life and, in my opinion, better sound out of the 6061s. What saved us was ZaxNet. We could never get into the pack to do anything, but I could adjust the levels and sleep the transmitters from my sound cart, so that aspect was really cool.

We were also at the mercy of the front shield. If it was in during filming, we were screwed because the mic’s diaphragm was never going to survive the pressure. It was just distorted. Luckily, we mainly worked without the shields installed because visual effects were going to add them later. That meant very little ADR on Avatar, which is unusual for a movie like this. The setup allowed us to record all the breaths even when there was no dialogue.

In all, we had about 30 pre-rigged masks. Every actor had a mask, and every actor had three different versions of it. Each mask had a name and Jim would call it out, so we made sure it was ready to go. Katie Paterson, our second assistant sound, was in charge of the process, working with costume props supervisor Richard Thurston.

Creating Drama through Sound

Whether the crew was recording live-action or motion capture, sound effects played on set heightened the emotion of scenes during filming.

Jim wants the realism to be there while he’s directing, especially with live-action. So we will play sound effects over dialogue scenes, mixing them in and out around the dialogue to place the actors in the situation, whether it’s them running scared for their lives or a bomb going off. Jim wants the whole reaction.

So for the scene in Fire and Ash when Spider is running out of air and his mask is beeping, Jim wanted to bring drama to it. So I’m hitting a beeping effect to represent the mask running out of air while recording Spider’s dialogue. This allowed Spider to feel the fear of the situation as the set was all bluescreen with his mask being the only real prop in the scene. It helped create this buildup and give Jack [Champion] something to react to that would otherwise be nothing on set.

Julian [Howarth] did this a lot on the motion capture performance side. He provided sound effects and a soundscape behind what they were filming so the actors could feel immersed in it. We were always loading sound effects to play for reactions. For example, when a whale creature breaches the water and crashes onto a ship, we would play a sound effect of the whale and record the human character’s reaction. It also allowed the extras in the background to know what to react to. We did that a lot for gunfire, explosions, and everything else. In a movie with a lot of bluescreen, it can be very helpful.

An Epic Na’vi Battle

A climactic sequence in Fire and Ash has the Na’vi fighting the humans over the open ocean. Guns, explosions, whales, flying creatures, and dozens of actors fill the screen. The sound team prepared for it all.

We were able to record all the main human characters with their mask lenses out, so we could mic them quite successfully. But if the scene used background stunt actors who potentially had lines, they would have their lenses in and we couldn’t get anything usable beyond a guide track of dialogue.

The massive challenge was when characters had to get wet on the whale hunting patrol boat called the Matador or on the massive Factory Ship that housed the command center with General Ardmore. Jim’s idea of spritzing actors down is to tip a bucket of water over their heads. So we had the Zaxcom transmitters in aqua packs with Countryman B3 or B6 lavs pointed down on the actors so the water would drain out of the capsule.

The Matador would be on a giant motion base, and once it was operating no one could go near it, so it added another layer of complexity. One time, though, our boom operator, Sam Spicer, arranged for a cherry picker to take him out over the Matador to place a boom over Mick Scoresby [Brendan Cowell] for a pivotal moment. So our plan was to have multiple microphones on actors, or a mic planted on a gun or somewhere in the set. Then if we could get close, we’d boom to cover the scene. There was also a huge logistical coordination element to that sequence, and some shots would take an entire day, so there was pressure to have all your bases covered. Then we had to install the whole communications system for safety and so the actors could hear the director. We weaved our way through all of it.

Another situation where Zaxcom worked well was when we were filming on the motion base, about a 30-minute drive from Stone Street Studios, where an actor was having a costume fitting. I sent Katie Paterson to the fitting with a Zaxcom ZMT4, her URX100, and a DPA 6061. She tried multiple placements for the lav, which she listened to on the URX100 and recorded on the ZMT4. She then drove back to set, where we downloaded the files into Pro Tools and all listened to the lav placements to decide on the best fit. This was all done with two pieces of equipment that talk to each other, record, and provide great remote recording and monitoring options.

Tony Johnson’s Fire and Ash sound team included key first assistant sound Corrin Ellingford (Johnson says “his contribution was immense and he was with me for the entire shoot”), boom operator Sam Spicer, and second assistant sound Katie Paterson. Second unit sound was shared by mixers Chris Hiles and Steve Harris, with second assistant sound Jessy McNamara. The sound intern was Hayden Washington Smith.

Julian Howarth’s Fire and Ash sound team included boom operator Ben Greaves and sound utilities Jamie Gambell, Kayla Croft, and Zack Wrobel.

Fire and Ash post sound was led by supervising sound editors Gwendolyn Yates Whittle and Brent Burge, with re-recording mixers Gary Summers, Michael Hedges, and Alexis Feodoroff. Sound design was by Hayden Collow and Christopher Boyes.